The Oversight Lab, a Kenyan digital rights group, has asked the Office of the Data Protection Commissioner (ODPC) to investigate whether footage captured by Ray-Ban Meta Smart Glasses is being used unlawfully to train artificial intelligence systems.

The request adds regulatory pressure on the global data supply chain that routes AI training work through Nairobi. It follows reports that contract workers in Kenya review images and videos recorded by the glasses to help train AI systems developed by Meta.

According to a formal complaint seen by TechCabal, the group asked the data regulator to examine whether people recorded by the glasses consented to their images and conversations being used to train Meta’s AI tools, and whether such processing complies with Kenya’s Data Protection Act.

The group also asked the ODPC to determine whether the devices could enable covert recording of people in public or private spaces without their knowledge.

The petition follows an investigation by Swedish newspapers Göteborgs-Posten and Svenska Dagbladet, which reported that Kenyan workers employed by the outsourcing firm Sama review footage captured by the glasses to help train Meta’s AI systems.

The investigation said footage is collected from users of the smart glasses worldwide and then routed to data annotation teams who classify objects, scenes and behaviour to improve Meta’s AI models.

According to the complaint, material sent to data labellers may contain highly sensitive scenes, including bathroom visits, intimate encounters, bank card details and recordings of people watching explicit content.

Workers review and label the images and videos so Meta’s AI systems can recognise objects, activities and environments captured by the glasses, the document stated.

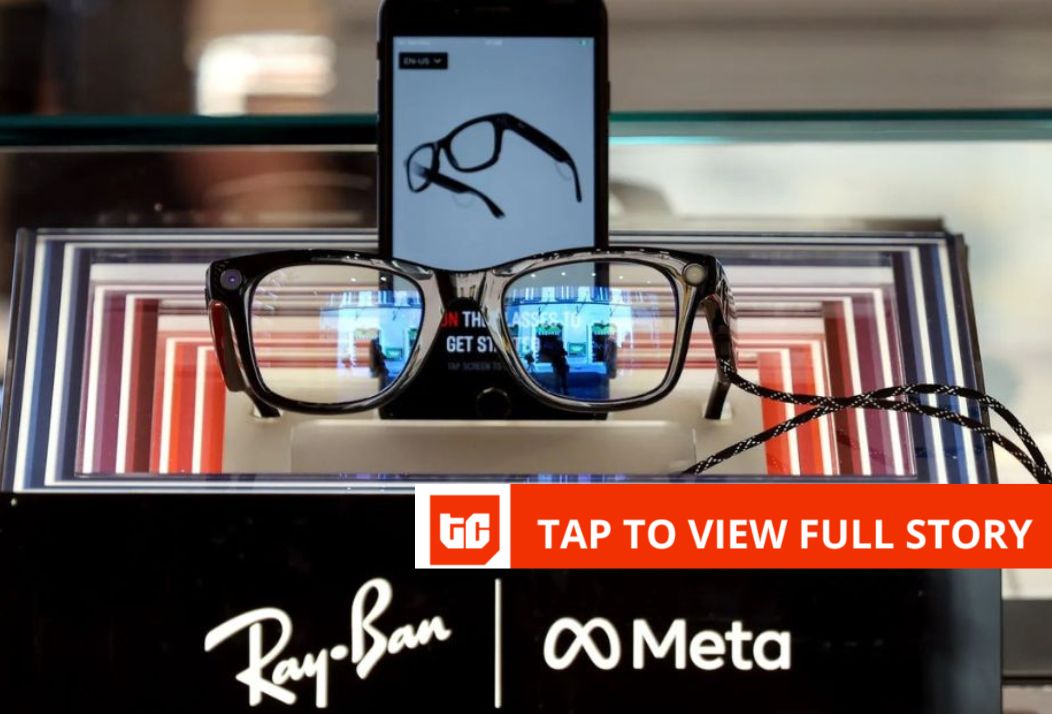

Ray-Ban Meta glasses allow users to capture photos and videos from a first-person perspective using built-in cameras and microphones. Some features are processed by Meta’s cloud-based AI services.

The Oversight Lab said the practice raises questions about whether people recorded by the glasses were informed that their personal data could be processed in Kenya and used to train AI systems.

“We are deeply concerned by the development of harmful technology through exploitation of vulnerable communities,” Mercy Mutemi, executive director of The Oversight Lab, said in a statement accompanying the request.

The group also asked the regulator to determine whether data collected through the glasses was transferred across borders and whether the companies involved carried out a data protection impact assessment before processing the information.

The smart glasses, developed by Meta in partnership with eyewear maker EssilorLuxottica, include cameras, microphones and an AI assistant, and can capture photos and video from the wearer’s point of view.

The devices are part of Meta’s push into consumer artificial intelligence and wearable computing, an area many technology companies see as the next step after smartphones.

The Oversight Lab said it was in contact with data labellers who handled the material and were willing to provide evidence to the regulator anonymously.

The complaint also references earlier labour disputes involving Meta contractors in Kenya, where content moderators sued the company and its partners over working conditions tied to content review operations.

The group asked the data protection authority to complete its investigation within 90 days and determine whether the companies involved complied with Kenyan law.

The complaint places fresh attention on Kenya’s role in the global AI industry, where thousands of contract workers label images, video and text used to train machine-learning systems for major technology companies.

Kenya has become a major hub for such work because of its large English-speaking workforce and established outsourcing sector, though labour groups have raised concerns about low pay and exposure to harmful content.

Crédito: Link de origem